This book, Data Mesh: Delivering Data-Driven Value at Scale by Zhamak Dehghani, essentially covers what I have been working on for the last 6 months or so. It is therefore highly relevant, but I have to be slightly cautious in what I write because of commercial confidentiality.

The Data Mesh is a new design for handling data within an organisation. It has been developed over the last 3 or 4 years, with Dehghani at the Thoughtworks consultancy at the core. Given its recency there are no Data Mesh products on the market, so one is left to build one's own from the components available.

To a large degree the data mesh is a conceptual and organisational shift rather than a technical one: all the technical component parts for a data mesh are available, apart from the programmatic glue to hold the whole thing together.

Data Mesh the book is divided into five parts. The first describes what a data mesh is in fairly abstract terms, the second explains why one might need a data mesh, and the third and fourth parts cover how to design the architecture of the data mesh itself and the data products that make it up. The final part is on “How to get started” – how to make it happen in your organisation.

Dehghani talks in terms of companies having established systems for operational data (data required to serve customers and keep the business running, such as billing information and the state of bank accounts). The data mesh is directed at analytical data – data which is derived from the operational data. Analytical data is used to drive machine learning recommender systems, for example, and to better understand the business, its customers and its operations. She uses a fictional company, Daff, Inc., that sounds an awful lot like Spotify, to illustrate these points.

The legacy data systems Data Mesh describes are data warehouses and data lakes, where data is managed by a central team. The core issue with this arrangement is scalability: as the number of data sets grows, the size of the central team grows and the responsiveness of the system drops.

The data mesh is a distributed solution to this centralised system. Dehghani defines the data mesh in terms of four principles, listed in order of importance:

- Domain Ownership – this says that analytical data is owned by the domains that generate it rather than a centralised data team;

- Data as a product – analytical data is owned as a product, with the associated management, discoverability, quality standards and so forth around it. Data products are self-contained entities in their own right – in theory you can stand up the infrastructure to deliver a single data product all by itself;

- Self-serve data platform – a self-serve data platform is introduced which makes the process of domain ownership of data products easier, delivering the self-contained infrastructure and services that the data product defines;

- Federated computational governance – this is the idea that policies such as access control, data retention, encryption requirements, and actions such as the “right to be forgotten” are determined centrally by a governance board but are stored, and executed, in machine-readable form by data products.
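
To make the last principle a little more concrete, here is a minimal sketch of a data product that stores a centrally defined policy in machine-readable form and executes it locally. The names `RetentionPolicy`, `Record` and `DataProduct` are my own hypothetical choices for illustration; they are not taken from the book or from any library.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class RetentionPolicy:
    """Hypothetical machine-readable policy, as set by a governance board."""
    max_age: timedelta


@dataclass
class Record:
    subject_id: str       # the person (data subject) the record relates to
    created_at: datetime
    payload: dict


class DataProduct:
    """Hypothetical data product that holds a policy and enforces it itself."""

    def __init__(self, policy: RetentionPolicy) -> None:
        self.policy = policy
        self.records: list[Record] = []

    def apply_retention(self, now: datetime) -> int:
        """Drop records older than the policy allows; return the number removed."""
        cutoff = now - self.policy.max_age
        before = len(self.records)
        self.records = [r for r in self.records if r.created_at >= cutoff]
        return before - len(self.records)

    def forget(self, subject_id: str) -> int:
        """Carry out a "right to be forgotten" request for one data subject."""
        before = len(self.records)
        self.records = [r for r in self.records if r.subject_id != subject_id]
        return before - len(self.records)
```

The point of the sketch is only the division of labour: the policy is decided once, centrally, but each data product carries it and runs it without calling back to a central team.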

For me the core idea is that of a swarm of self-contained data products which are all independent but, by virtue of simple behaviours and some mesh-spanning services (such as a data catalogue), provide a whole that is greater than the sum of its parts. A parallel is drawn here with domain-driven design and microservices, on which the data mesh is modelled.

I found the parts on designing the data mesh platform and data products most interesting since this is the point I am at in my work. Dehghani breaks the data mesh down into three “planes”: the infrastructure utility plane, the data product experience plane, and the mesh experience plane (this is where the data catalogue lives).

We spent some time worrying over whether it was appropriate to include data processing functionality in our data mesh – Dehghani makes it clear that this functionality is in scope, arguing that the benefit of the data product orientation is that only a small number of data pipelines are managed together, rather than hundreds or possibly thousands in a centralised scheme.

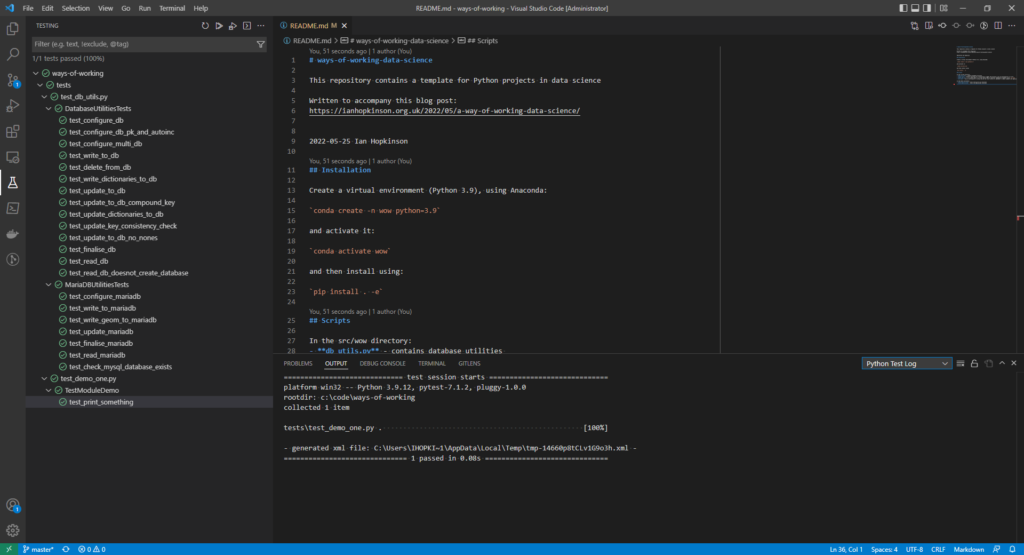

I have been spending my time writing what Dehghani describes as the “sidecar”: common code that sits inside the data product to provide standard functionality. In terms of useful new ideas, I have been worrying about versioning of data schema and attributes – Dehghani proposes that “bitemporality” is what is required here (see Martin Fowler’s blog post on the subject for an explanation). Essentially bitemporality means recording the time at which schema and attributes were changed and the time at which data was provided, as well as the processing time. This way one can always recreate a processing step simply by checking which set of metadata and data were in play at the time (bar data being deleted by a data retention policy).
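
A rough sketch of how a bitemporal lookup might work is given below. It assumes two timestamps per entry, which I have called `actual_time` (when a schema or value took effect) and `processing_time` (when the data product recorded it). The class and method names are hypothetical and this is not the code I use at work.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Version:
    actual_time: datetime      # when the value took effect
    processing_time: datetime  # when the data product recorded it
    value: str


class BitemporalLog:
    """Append-only log: entries are added, never updated in place."""

    def __init__(self) -> None:
        self._versions: list[Version] = []

    def record(self, actual_time: datetime, processing_time: datetime, value: str) -> None:
        self._versions.append(Version(actual_time, processing_time, value))

    def as_of(self, actual_time: datetime, processing_time: datetime) -> Optional[str]:
        """Return the value in effect at `actual_time`, as known at `processing_time`."""
        known = [
            v for v in self._versions
            if v.processing_time <= processing_time and v.actual_time <= actual_time
        ]
        if not known:
            return None
        # Latest effective time wins; ties go to the most recently recorded entry.
        return max(known, key=lambda v: (v.actual_time, v.processing_time)).value


log = BitemporalLog()
log.record(datetime(2024, 1, 1), datetime(2024, 1, 1), "schema-v1")
# A correction recorded on 5 January that applies retroactively from 1 January.
log.record(datetime(2024, 1, 1), datetime(2024, 1, 5), "schema-v2")
```

With this log, `log.as_of(datetime(2024, 1, 3), datetime(2024, 1, 3))` gives the schema a job run on 3 January would have seen, while asking the same question with a processing time of 6 January gives the corrected one. Because nothing is overwritten, the earlier run can still be reproduced.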

Data Mesh also encouraged me to decouple my data catalogue from my data processing, so that a data product can act in a self-contained way without depending on the data catalogue, which serves the whole mesh and allows data to be discovered and understood.

Overall, Data Mesh was a good read for me, in large part because of its relevance to my current work, but it is also well-written and presented. The lack of mention of specific technologies is rather refreshing and means the book will not go out of date within the next year or so. The first companies are still only a short distance into their data mesh journeys, so no doubt a book written in five years' time will be a different one, but I am trying to solve a problem now!