In a break from usual service I am reviewing a “computer game”, Black Myth Wukong by Game Science Interactive Technology. I started gaming in the early eighties, when I was in my early teens. I think there was a bit of a break when I went to university, then I continued into my early thirties (around 2000). There was then a pause until a while after my son was born; we got a PlayStation 5 at Christmas 2021 “for him”.

Since then my favourite games have been Horizon Zero Dawn, Horizon Forbidden West, Elden Ring, Lies of P and Ghost of Tsushima. I bought Black Myth Wukong with my Christmas money – a child once again! In common with my other favourite games, it falls into the “action role-playing” category.

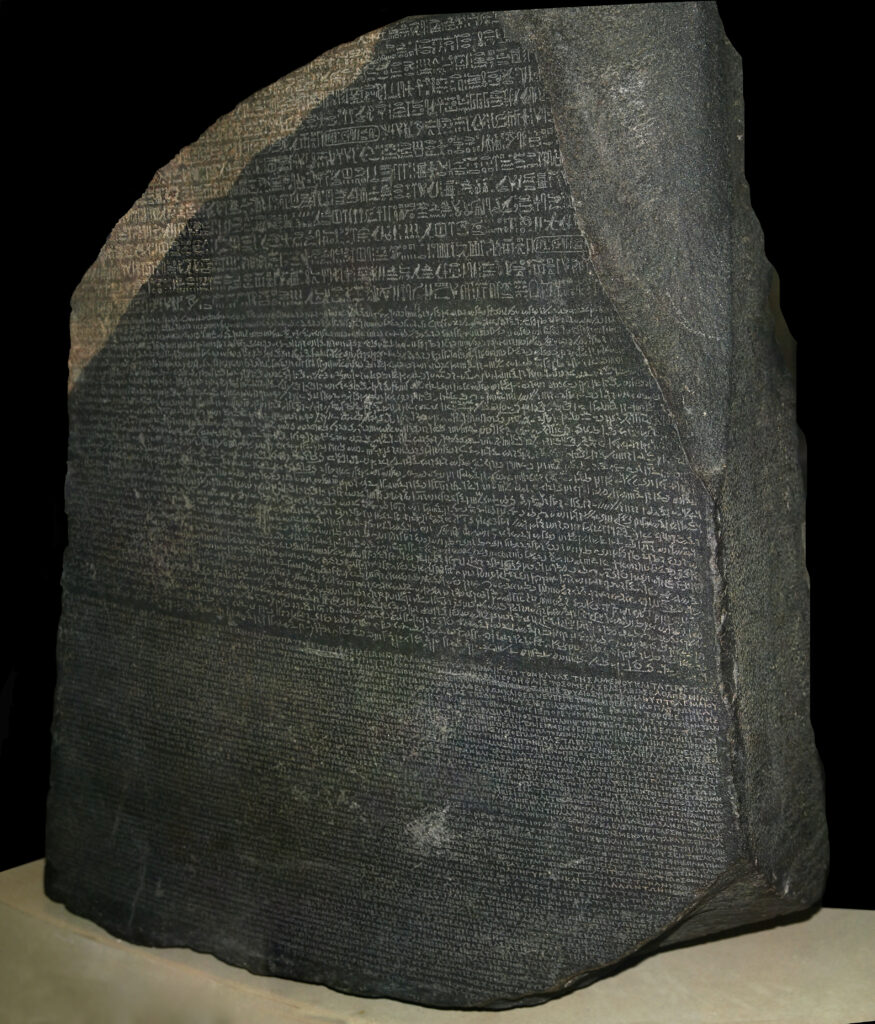

Black Myth Wukong is based on Journey to the West, a 16th-century Chinese novel which I know from an eighties TV series that I remember by its short name, “Monkey”. In the game you play the part of “the Destined One” (a monkey) whose task is to retrieve the six relics of Sun Wukong.

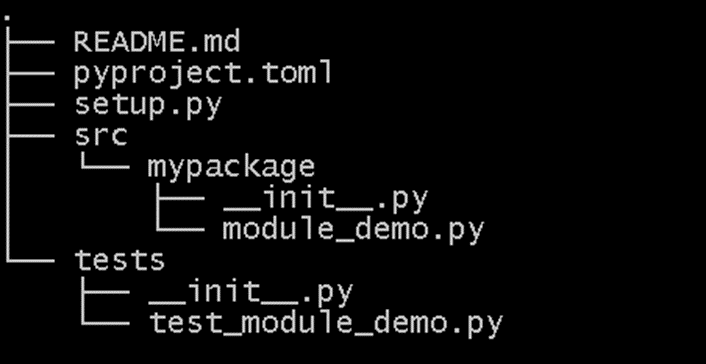

The action takes place over six chapters. It is closest in style to Lies of P, that’s to say the chapters involve a roughly linear path with battles against minor characters who respawn and bosses who you must defeat to progress. Defeating a character brings rewards: items and “will”, which is the currency used to level up your character and to buy upgrades and consumables. Fighting is action rather than turn based. Your weapons are a trusty staff (which can be replaced and upgraded through the game) and various spells and abilities which fall into several categories: active spells, defensive spells, transformations, spirits and vessels. There’s a huge range of spells and so forth to choose from. My favourite is “Pluck of Many”, which summons a posse of replica monkeys to fight for you (but only for a brief period).

Black Myth uses the traditional trifecta of health, stamina and some consumable spell substance (Mana in this case) to indicate your current status. A couple of vessels and spirits are tied to a second mystical substance, Qi, and spells have a cooldown period so you have to wait to use them again. Health is recovered by drinking from your gourd, which contains a variety of upgradable drinks and “soaks” which have various effects. I think it’s best to think of the “soaks” as teabags! You can also collect a variety of modifying relics which can be equipped to boost various characteristics.

I liked the upgrade and progress mechanics. There are extensive skill trees, but you can re-allocate “sparks” freely at the save points (shrines). Dying does not cost you your accumulated will; losing it on death is an irritating feature of Elden Ring and similar games. I have died futilely so many times in Elden Ring attempting to retrieve my accumulated experience points.

The graphics in Black Myth are stunning, a step above even Elden Ring and Lies of P, which are excellent. This will be down, in part, to Unreal Engine 5. The chapters also have quite different visual styles: the first chapter, set on Black Wind Mountain, is lush forest; the second, Yellow Wind Ridge, is scorched desert; the third, The New West, is snowy mountains; the fourth, The Webbed Hollow, is a creepy, cavernous underground environment; the fifth, Flaming Mountains, is a scorched volcanic moonscape; and the final chapter, Mount Huaguo, is a mountainous, forested open world. In addition there are a couple of “secret” areas which are accessed by completing quest lines. The gameplay also varies a bit from chapter to chapter, with some chapters like Yellow Wind Ridge and The Webbed Hollow feeling close to open world.

Your enemies are well designed and have a very wide range of attacks, your own spells are very varied, and both are rendered beautifully. I found the dodging animations particularly satisfying. I thought the in-game dialogue and interactions with characters were pretty good. Game Science is a Chinese studio and there were a couple of places where the translation seemed slightly odd (I’m thinking of the “non-white” and “non-able” bosses).

There is no difficulty level selection, so if you are struggling to get past a boss then you have to “git gud”, which is sometimes a pain. I have a sneaking suspicion that some of the most challenging bosses are amenable to tactics which you simply need to find, rather than being straightforward battles of skill and reflex. Fall damage is not an issue until it is, in parts of Chapter 3! There is very limited parrying in Black Myth, which some will miss.

I got about a month of play out of Black Myth for the first run through, amounting to 98 hours of gameplay, but there is New Game+ to play and a couple of challenge features where you can refight bosses. These are well suited to beating those foes that you struggled with early in the game.

Overall I loved Black Myth Wukong, and I can’t wait for the rumoured DLC.