Author's posts

Feb 17 2016

Book Review: The Honourable Company by John Keay

I’ve been reading a lot of books about naturalists who have been on great expeditions: Alexander von Humboldt, Charles Darwin, Joseph Banks and the like. This book, The Honourable Company by John Keay, is a bit of a diversion into great expeditions for commercial purposes. Such expeditions form the context, and the “infrastructure”, in which scientific expeditions take place. The book is a history of the English East India Company, founded in the early 17th century with a charter from the English sovereign to conduct trade in the Far East (China, Japan, Java) and India.

It is somewhat chastening to realise the merchants had been exploring the world for one hundred years (and the Spanish and Portuguese for nearer 200 years) before the scientific missions really got going in the 18th century.

The book is divided into four parts, each covering a period of between 40 and 80 years. Within each part there is a further subdivision into geographical areas: the East India Company had interests at one time or another from Java, Japan and China in the Far East, through Calcutta, Bombay and Surat in India, to Mocha in the Middle East.

The East India Company was chartered in 1600, following the pattern of the (slightly) earlier Muscovy and Levant Companies, which sought a North East passage to the Far East and trade with Turkey respectively. At the time the Spanish and Portuguese were dominating long-distance trade routes. The Dutch East India Company was formed shortly after the English, and would go on to be rather more successful. The Company offered investors the opportunity to combine together to fund a ship on a commercial journey. The British Crown gave the Company exclusive rights to arrange such trade expeditions.

Initially the aim was to bring back lucrative spices from the Far East. In practice the trade shifted first to India and, in the Company’s later years, to China and the import of tea. The Dutch were more militarily assertive in the Far East, where spices like nutmeg and pepper were sourced.

Once again I’m struck by the amount of death involved in long distance expeditions. It seems western Europeans had been projecting themselves across the oceans with 50% mortality rates from sometime in the early 16th century to the end of the 18th century. For the East India Company, many of their factors – the local representatives – were to die of tropical diseases in their placements.

In the early years investors bought into individual expeditions, with successive expeditions effectively competing with each other for trade. This was unproductive, and subsequently investment was in the Company as a whole. It is worth noting, though, that even in the later years of the Company in India the different outposts in Madras, Bombay and so forth were not averse to acting independently and even in opposition to each other’s will, if not interests. Alongside the Company’s official trade the employees engaged in a great deal of unofficial activity for their own profit; this was known as the “country trade”.

The East India Company’s activities in India led to the British colonisation of the country. For a long time the Company made a fairly good effort at not being an invading force, basically seeing it as being bad for trade. This changed during the first half of the 18th century, when the Company became increasingly drawn into military action and political intrigue, either with local leaders against third parties or in proxy battles with other European powers with which the home country was at war. Ultimately this led to the decline of the Company, since the British Government saw it acting increasingly as a colonial power and saw this as its own purview. This was enacted in law through the Regulating Act of 1773 and the East India Company Act of 1784, which introduced a Board of Control overseeing the Company’s activities in India.

Keay is very much focussed on the activities of the Company, the records it kept and previous histories, so it is a little difficult to discern what the locals would have made of the Company. He comments that there has been a tendency to draw a continuous thread from the early trading activities of the Company to British India in the mid-19th century and onwards but seems to feel these links are over-emphasised.

India is the main focus of the book despite the importance of China, tea and the opium trade in the later years, which are covered only briefly in the last few pages. I must admit I found the array of places and characters a bit overwhelming at times, not helped by my slightly vague sense of Indian geography. It’s certainly a fascinating subject and it was nice to step outside my normal reading.

Jan 30 2016

Book review: Pro Git by Scott Chacon and Ben Straub

Pro Git by Scott Chacon and Ben Straub is available to download for free, or to read online at the website, but you can buy a paper copy if you prefer. I downloaded and read it on my tablet. Pro Git is the bible of all things relating to git, the distributed version control system. This is an application to record the history of changes to your computer code, or any other plain text file. Such applications are essential if you are a software company producing code commercially, or if you are collaborating on an open source project. They are also useful if you use code in analysis or modelling, as I do.

Git is most famous as the creation of Linus Torvalds in support of the development of the Linux operating system. For developers version control is a fundamental activity which crosses all boundaries of domain and language. Git is one of the more recent examples in a line of version control systems; my former colleague Francis Irving wrote very nicely about this subject.

My adventures with source control extend over 20 years, although it is fair to say that I didn’t really use it in anger until I worked at ScraperWiki. There my usage moved from being a safety line for work that only really impacted me, to a collaborative tool. I picked up my usage of git through pairing with other people, and through explicitly stated conventions for using git in a development team. Essentially one of the other developers told us off if he thought our commit messages were not up to scratch! This is a good thing. This culturally determined use of git is important in collaborative environments.

My interest in git has recently been re-awoken for a couple of reasons: my new job means I’m doing a lot of coding, and I discovered GitKraken which is a blingy new git client. I’ve not used a graphical git client before but GitKraken is very pretty and the GUI invites you to discover more git functionality. Sadly it doesn’t work on my work PC, and once it leaves beta I have no idea what the price might be.

Pro Git starts with an introduction to git and the basics of getting up and running. It then goes on to describe how to use git in collaborative environments, how to use git with GitHub and then more advanced topics such as how to write hooks, and use git as a client to Subversion (an earlier source control system). Coverage feels pretty complete to me. It’s true that you might resort to Stack Overflow to answer some questions, but that’s universally true in coding.

The book finishes with a chapter on git internals: what is going on under the hood as you issue commands. Git has a famous division between “porcelain” and “plumbing” commands. Plumbing is what really gets things done, low level commands with somewhat opaque meaning, whilst porcelain is the stuff you use day to day. The internals chapter starts by showing how the plumbing works by reproducing the effects of some of the porcelain commands. This is surprisingly informative, and built my confidence a bit – I always have some worry that I will lose something irrevocably by issuing the wrong command in git. These dangers exist but Pro Git is clear where they lie.

Here are a couple of things I’ve already started using on reading this book:

git log --since=1.week

– filter the log to just show the commits made in the last week, other time options are available. Invaluable for weekly reporting!

git describe

– make a human readable (sort of) build number based on the most recent tag and how far you are along from it.

And there are some things I used to wonder about. First of all I should consider commits as a tree structure, with branches being pointers to particular commits. In this context HEAD^ refers to the parent commit of the current HEAD, or latest commit. HEAD~2 refers to the grandparent of the current commit, and so on. I now have some appreciation of soft, mixed and hard resets. Hard is bad: it could lose your work!
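To fix the idea of commits as a tree with branches as pointers, here is a toy model in Python. It is my own sketch rather than anything from Pro Git, it is not how git stores things internally, and the commit names (`c1` to `c4`) and the function `first_parent_ancestor` are invented for illustration.

```python
# Toy model of a commit graph: each commit maps to its list of parents
# (first parent first). The commit names are made up for illustration.
parents = {
    "c1": [],
    "c2": ["c1"],
    "c3": ["c2"],
    "c4": ["c3"],
}

# Branches and HEAD are just names pointing at a particular commit.
refs = {"master": "c4", "HEAD": "c4"}


def first_parent_ancestor(ref, n):
    """Follow the first parent n times, in the spirit of ref~n in git."""
    commit = refs.get(ref, ref)
    for _ in range(n):
        commit = parents[commit][0]
    return commit


print(first_parent_ancestor("HEAD", 1))  # the parent, like HEAD^ or HEAD~1
print(first_parent_ancestor("HEAD", 2))  # the grandparent, like HEAD~2
```

In this picture, moving a branch is just a matter of changing which commit a name in `refs` points to; the commits themselves are untouched.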

I now know why git filter-branch was spoken of in hushed tones in the ScraperWiki office, basically because it allows you to systematically rewrite the history of a repository which is sort of really wrong in source control.

Pro Git is good in outlining not only what you can do but also what you should do. For example, one has the choice with git to merge different branches or to carry out a rebase. I’d always been a bit vague on the difference between these two things but Pro Git explains clearly, and also tells you when you shouldn’t use rebase (when other people have seen the commits you are rebasing).

My electronic edition on Kindle does suffer from the occasional glitch with some paragraphs appearing twice but the writing is clear and natural. Pro Git can’t be beaten for the price and it is probably worth the £32 Amazon charges for a paper copy.

Jan 06 2016

Book review: A History of the 20th Century in 100 Maps by Tim Bryars and Tom Harper

A History of the 20th Century in 100 Maps by Tim Bryars and Tom Harper is similar in spirit to The Information Capital by James Cheshire and Oliver Uberti: a coffee table book pairing largely geographic visualisations with relatively brief text. Looking back I see that The Information Capital was a birthday present from Mrs SomeBeans, 100 Maps was a Christmas present from the same!

The book is published by the British Library. The maps are wide ranging in scale, scope and origin. Ordnance Survey maps of the trenches around the Battle of the Somme nestle against tourist maps of the Yorkshire coast, and tourist maps of Second World War Paris for the Nazi occupiers. The military maps are based on the detailed work by organisations like the Ordnance Survey whilst other maps are cartoons of varying degrees of refinement, “crude” being particularly relevant in the case of the Viz map of Europe!

War and tourism are recurring themes of the book. In war, maps are used to plan a battle, to conduct a post mortem and as propaganda. It is not uncommon to see newspaper cartoon style maps with countries represented by animals, or breeds of dog. In tourism, maps are used as marketing – the presentation and “incidental” details at least as important as the navigation. Sometimes the “tourism” relates to state events. Not quite fitting into either war or tourism is the crudest map in the book, a sketch of Antarctica on the back of a menu card, made by Shackleton as he tried to persuade his neighbour at a formal dinner to help fund his expedition.

A third category is the economic map showing, for example, the distribution of new factories around the UK in the post-war period or the exploitation of oil in the Caribbean or the North Sea. These maps were published sometimes for education, and sometimes as part of a prospectus for investment or a tool of persuasion.

The focus of the book is really on the history of Britain rather than maps; this is a useful bait-and-switch for people like me who are ardent followers of maps but not so much of history. I was struck by the impact that the two world wars of the 20th century had on the UK. Not simply the effect of war itself but the rise of women in the workforce, independence movements in the now former colonies, homes for heroes, nationalisation – the list goes on. It seems the appetite for all these things was generated during the First World War but was not satisfied until after the Second. The First World War also saw the introduction of prohibitions on opium and cocaine: prior to this they were seen as the safe recreation of the upper middle classes, but during the war they were seen as a risk to the war effort. America had led the way with the prohibition of drugs, and later alcohol, seeing them as a vice of immigrants and the working classes.

I didn’t realise there were no controls on immigration to the UK until the 1905 Aliens Act; this nugget is found in a commentary on a map entitled “Jewish East London”. This map was based on Charles Booth’s social maps of London and published by the Toynbee Trust. The Jewish population had been increasing over the preceding 25 years following pogroms and anti-Semitic violence in the Russian Empire. The Trust published the map alongside articles for and against immigration controls but, as the authors point out, the map is designed with its use of colouring and legends to make the case against.

100 Maps is not all about the real world: E.H. Shepard’s map of the Hundred Acre Wood makes it in, as well as Tolkien’s map of Middle Earth. In both cases the map provides an overview of the story inside. I rather like the map of the Hundred Acre Wood with “Eeyores Gloomy Place – rather boggy and sad”, and a compass rose which spells out P-O-O-H.

The book is well-made and presented but in these times of zoomable online maps physical media struggle to keep up. The maps are difficult to study in detail at the A4 size in which they are presented. 100 maps makes some comment on the technical evolution of maps, not so much in the collection of data, but in the printing and presentation process. It is only in the final decades of the century that full colour printed maps become commonplace. It is around 1990 that the Ordnance Survey becomes fully digital in its data collection.

All in all a fine present – thank you Mrs SomeBeans!

Dec 31 2015

Review of the year: 2015

Another year comes to an end and it is time to write my annual review. As usual my blog has been a mixture, with book reviews the most frequent item. I also wrote a bit about politics and some technology blog posts. You can see a list of my posts this year on the index page. My technology blog posts are about programming, and the tools that go with it – designed as much to remind me of how I did things as anything else.

My most read blog post this year was a technical one on setting up Docker to work on a Windows 10 PC – it appears to have gone out in an email to the whole Docker community. For the non-technical reader, Docker is like a little pop up workshop which a programmer can take with them wherever they go; all their familiar tools will be found in their Docker container. It makes sharing the development of software, and deploying it in different places, much easier.

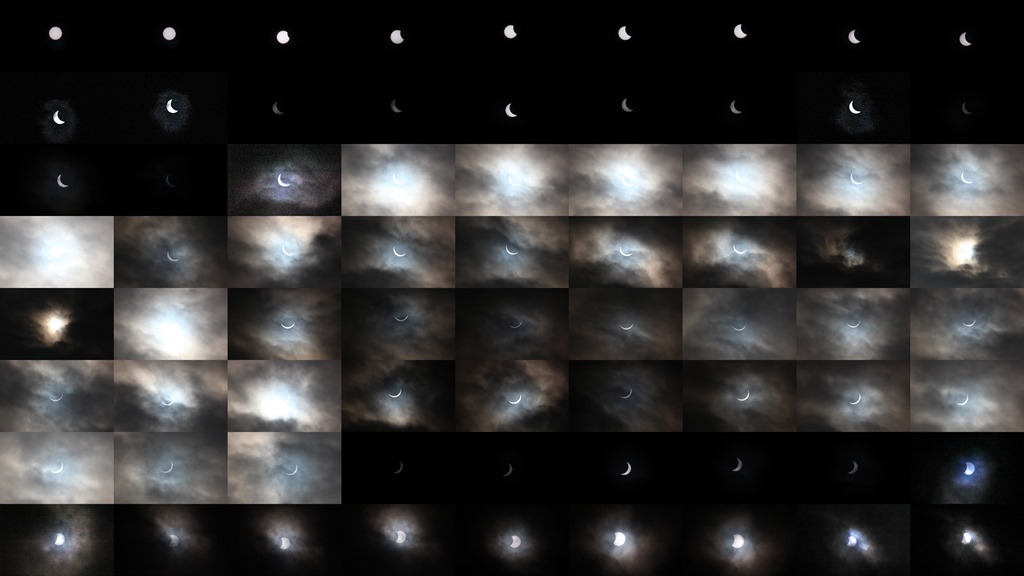

Actually my most read blog post this year was the review of my telescope, which I wrote a few years ago – it clearly has enduring appeal! Sadly, I haven’t made much use of my telescope recently but I did reuse my experience to photograph the partial eclipse, visible in north west England in March. I took a whole pile of photographs and wrote a short blog post. It is a montage of my eclipse photos which graces the top of this post. I think the surprising thing for me was how long the whole thing took.

In book reading there was a mixture of technical books which I read in relation to my work, and because I am interested. My favourite of these was High Performance Python by Micha Gorelick and Ian Ozsvald, which led me to think more deeply about my favoured programming language. I read a number of books relating to the history of science. The Values of Precision, edited by M. Norton Wise, stood out – this was an edited collection about the evolution of precision in the sciences since population studies in pre-Revolutionary France. Many of the themes spoke directly to my experience as a scientist, and it was interesting to read about them from the point of view of historians. Andrea Wulf’s biography of Alexander von Humboldt was also very good.

There was a General Election this year, which led to a little blogging on my part and then substantial trauma (as a Liberal Democrat). I stood for the local council in the “Chester Villages” ward, where I beat UKIP and the Greens (full results here). Sadly the Chester Liberal Democrats lost their only seat on the Cheshire West and Chester Council.

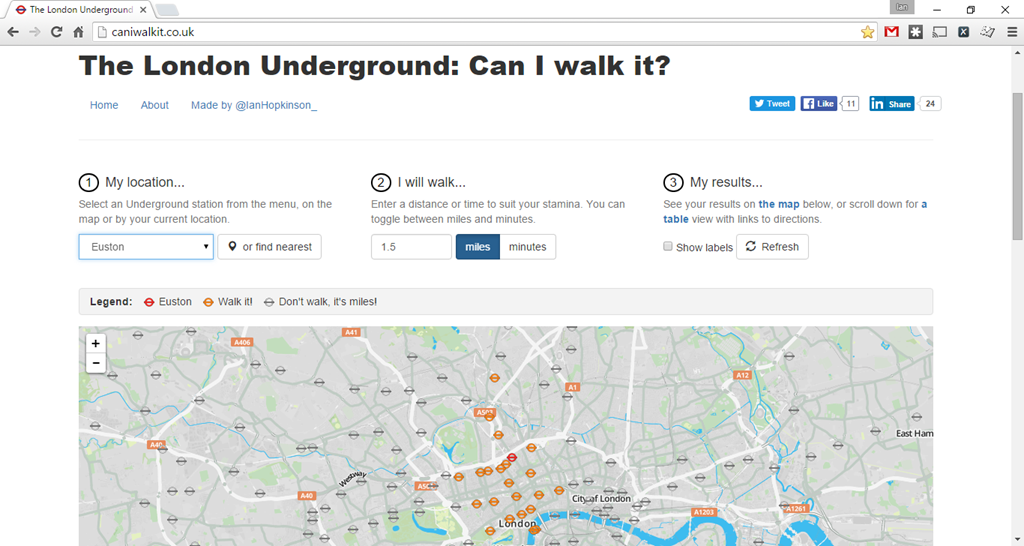

I did a couple of little technical projects for my own interest over the year. I made my London Underground – Can I walk it? tool, which helps the user decide whether to walk between London Underground stations, the distance between them often being surprisingly short in the central part of London. The distinguishing features of this tool are that it is dynamic, and that it covers walking distances which are not just to the nearest neighbour on the current line. You can find the website here. This little project incorporated a number of bits of technology I’d learnt about over the past few years, and featured help from David Hughes on the design side – you can see the result below.

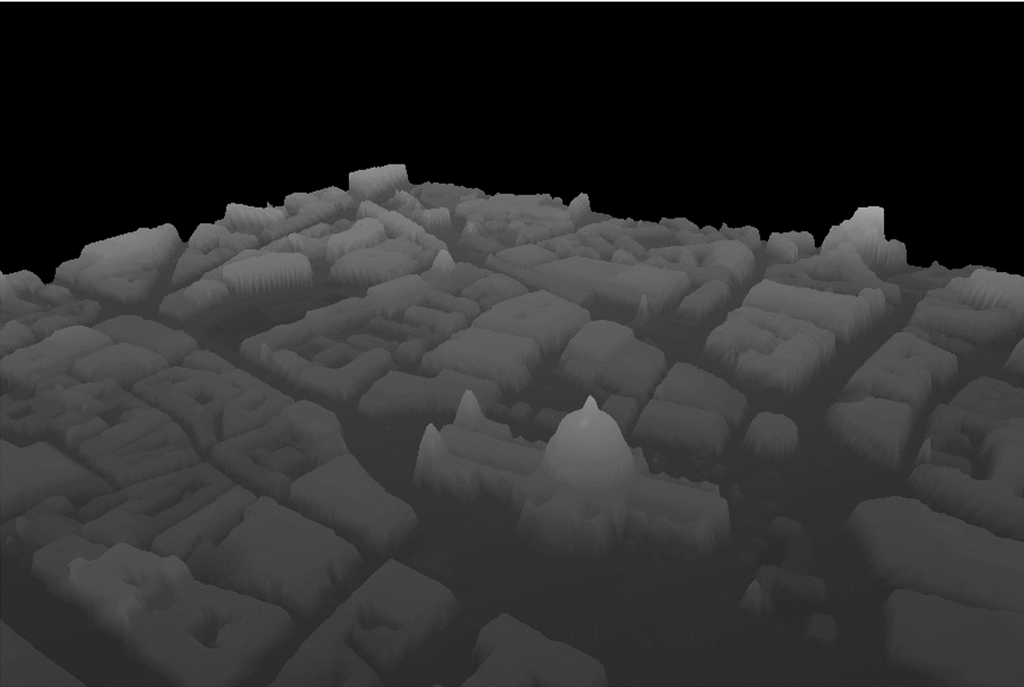

My second project was looking at the recently released LIDAR data from the Environment Agency; I wrote about it here. LIDAR is a laser technique for determining the height of the land surface (or buildings, if they are in the way) to a high resolution – typically 1 metre but down to 25cm in some places. The data cover about 85% of England. The Environment Agency use the data to help plan flood and coastal defences, amongst other applications. I had fun overlaying the LIDAR imagery onto maps, and rendering it in 3D; below you can see St Paul’s cathedral rendered in 3D.

I changed job in the Autumn, moving from ScraperWiki in Liverpool to GB Group in Chester. In my new job I’m spending my days playing with data, and attending virtually no meetings – so all good there! Also my commute to work is a 25 minute cycle which I really enjoy. But I really value the experience I got at ScraperWiki. It was a startup with an open source mentality, so I learnt lots of new things and could talk about them. I also got to work with some really interesting customers. It brought home to me how difficult it is to make a business work: it’s not enough just to do something clever – somebody has to pay you enough to do it – and that’s actually the really hard part.

I wrote a now obligatory holiday blog post. We stayed in Portinscale, just outside Keswick for our holiday at a time when the weather was rather better. The highlight of the trip for me was the Threlkeld Mining Museum, a place where older men collect old mining equipment for their entertainment and that of small children. Although Allan Bank in Grasmere was a close second, Allan Bank is a laid back hippy commune style National Trust property. Below you can see a view of Derwent Water to Catbells from Keswick.

A couple of things I haven’t blogged about: I started running in May and since then I’ve gone from running 5km in 34 minutes to 5km in 24 minutes, I’ve also lost 10kg. I should probably write a blog about this, since it involves data collection. There are some technical bits and pieces I’d quite like to write about (Python modules and sqlite) either because I use them so often or they’ve turned out to be useful. The other thing I haven’t written about is my CBT.

Dec 30 2015

Book review: Risk Assessment and Decision Analysis with Bayesian Networks by N. Fenton and M. Neil

As a new member of the Royal Statistical Society, I felt I should learn some more statistics. Risk Assessment and Decision Analysis with Bayesian Networks by Norman Fenton and Martin Neil is certainly a mouthful but despite its dry title it is a remarkably readable book on Bayes Theorem and how it can be used in risk assessment and decision analysis via Bayesian Networks.

This is the “book of the software”: the reader gets access to the “lite” version of the authors’ AgenaRisk software (the website is here). The book makes heavy use of the software in terms of presenting Bayesian networks and also in the features discussed. This is no bad thing; the book is about helping people who analyse risk or build models to do their job rather than providing a deeply technical presentation for those who might be building tools or doing research in the area of Bayesian Networks. With access to AgenaRisk the reader can play with the examples provided and make a rapid start on their own models.

The book is divided into three large sections. The first six chapters provide an introduction to probability, and the assessment of risk (essentially working out the probability of a particular outcome). The writing is pretty clear; I think it’s the best explanation of the null hypothesis and p-values that I’ve read. The notorious “Monty Hall” problem is introduced. It then goes into Bayes’ theorem in more depth.

Bayes Theorem originates in the writings of Reverend Bayes published posthumously in 1763. It concerns conditional probability, that is to say the likelihood that a hypothesis H is true given evidence E written P(H|E). The core point being that we might have the inverse of what we want: an understanding of the likelihood of evidence given a hypothesis, P(E|H). Bayes Theorem gives us a route to calculate P(H|E) given P(E|H), P(E) and P(H). The second benefit here is that we can codify our prejudices (or not) using priors. Other techniques deny the existence of such priors.
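As a small worked example of my own (not taken from the book), the theorem can be written P(H|E) = P(E|H) × P(H) / P(E), with P(E) obtained by summing over the cases where H is true and where it is false. The numbers below are invented purely for illustration: a hypothesis with a 1% prior, evidence that turns up 90% of the time when the hypothesis is true and 5% of the time when it is false.

```python
def posterior(prior_h, p_e_given_h, p_e_given_not_h):
    """Return P(H|E) using Bayes' theorem."""
    # P(E) by the law of total probability over H and not-H
    p_e = p_e_given_h * prior_h + p_e_given_not_h * (1 - prior_h)
    return p_e_given_h * prior_h / p_e


# Illustrative, made-up numbers: a rare hypothesis and fairly good evidence
p = posterior(prior_h=0.01, p_e_given_h=0.9, p_e_given_not_h=0.05)
print(f"P(H|E) = {p:.3f}")
```

With these made-up inputs the posterior comes out at roughly 15%, far lower than the 90% figure might suggest, which is the prior doing its work.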

Bayesian statistics are often put in opposition to “frequentist” statistics. This division is sufficiently pervasive that starting to type frequentist, Google autocompletes with vs Bayesian! There is also an xkcd cartoon. Fenton and Neil are Bayesians and put the Bayesian viewpoint. As a casual observer of this argument I get the impression that the Bayesian view is prevailing.

Bayesian networks are structures (graphs) in which we connect together multiple “nodes” of Bayes theorem. That’s to say we have multiple hypotheses with supporting (or not) evidence which lead to a grand “outcome” or hypothesis. Such a grand outcome might be the probability that someone is guilty in a criminal trial or that your home might flood. These outcomes are conditioned on multiple pieces of evidence, or events, that need to be combined. The neat thing about Bayesian Networks is that we can plug in what data we have to make estimates of things we don’t know – regardless of whether or not they are the “grand outcome”.

The “Naive Bayesian Classifier” is a special case of a Bayesian network where the evidence nodes are all assumed to be independent of one another given the outcome, leading to a simple hub and spoke network.

Bayesian networks were relatively little used until computational developments in the 1980s meant that arbitrary networks could be “solved”. I was interested to see David Spiegelhalter’s name appear in this context; arguably he is one of few publicly recognisable mathematicians in the UK.

The second section, covering four chapters, goes into some practical detail on how to construct Bayesian networks. This includes recurring idioms in Bayesian Networks, which they name the cause-consequence idiom, measurement idiom, definitional/synthesis idiom and induction idiom. The idea is that when you address a problem, rather than starting with a blank sheet of paper, you select the appropriate idiom as a starting point. The typical problem is that the “node probability tables” can quickly become very large for a carelessly constructed Bayesian network; the book’s idioms help reduce this complexity.
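To get a feel for how quickly a node probability table grows, here is a small sketch of my own (not from the book or its software); the function name `npt_size` is invented for illustration. It assumes every node has a fixed number of discrete states, so the table for a node needs one entry for each of its own states for every combination of its parents’ states.

```python
from math import prod


def npt_size(node_states, parent_states):
    """Number of entries in a node probability table.

    node_states: number of states of the node itself.
    parent_states: list with the number of states of each parent.
    """
    return node_states * prod(parent_states)


# A five-state node with a growing number of five-state parents
for n_parents in range(1, 9):
    print(n_parents, npt_size(5, [5] * n_parents))
```

Each extra parent multiplies the table size, which is why restructuring a network so that nodes have fewer parents pays off so quickly.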

Along with idioms this section also covers how ranked and continuous scales are handled, and in particular the use of dynamic discretization schemes for continuous scales. There is also a discussion of confidence levels which highlights the difference in thinking between Bayesians and frequentists: essentially the Bayesians are seeking the best answer given the circumstances whilst the frequentists are obsessing about the reliability of the evidence.

The final section of three chapters gives some concrete examples in specific fields: operational risk, reliability and the law. Of these I found the law examples the most pertinent. Bayes analysis fits very comfortably with legal cases: in theory, a legal case is about assigning a probability to the guilt or otherwise of a defendant by evaluating the strength of evidence (or the probability that it is true). In practice one gets the impression that faulty “commonsense” can prevail in emotive cases, and experts in Bayesian analysis are only brought in at appeal.

I don’t find this surprising: you only have to look at the amount of discussion arising from the Monty Hall problem to see that even “trivial” problems in probability can be remarkably hard to reason clearly about. I struggle with this topic myself despite substantial mathematical training.
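When the reasoning won’t settle, a simulation can at least check the answer. This is my own quick sketch rather than anything from the book, and it assumes the standard rules of the Monty Hall problem: three doors, one car, and a host who always opens a door that is neither the contestant’s pick nor the car. The function names and the fixed random seed are arbitrary choices.

```python
import random


def play(switch, rng):
    """Play one round; return True if the contestant wins the car."""
    doors = [0, 1, 2]
    car = rng.choice(doors)
    pick = rng.choice(doors)
    # The host opens a door that is neither the contestant's pick nor the car.
    opened = rng.choice([d for d in doors if d != pick and d != car])
    if switch:
        # Switch to the one remaining closed door.
        pick = next(d for d in doors if d != pick and d != opened)
    return pick == car


def win_rate(switch, trials=20000, seed=0):
    """Fraction of rounds won over a number of simulated games."""
    rng = random.Random(seed)
    wins = sum(play(switch, rng) for _ in range(trials))
    return wins / trials


print("stick: ", win_rate(switch=False))
print("switch:", win_rate(switch=True))
```

Sticking should win about a third of the time and switching about two thirds, which is the answer that so many people find hard to accept.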

Overall a readable book on a complex topic, if you want to know about Bayesian networks and want to apply them then definitely worth getting but not an entertaining book for a casual reader.